Continuous Integration Server Jenkins

In this post we present Jenkins, a continuous integration tool that is part of the Cloudogu EcoSystem. We introduce Jenkins and continuous integration in general, and then look at how Jenkins is set up within the Cloudogu EcoSystem.

Jenkins Server

As mentioned, Jenkins is a continuous integration tool. It was first released in 2005 under the name Hudson. In 2010 a dispute arose between Oracle and the Hudson community, and as a consequence the project was forked under the name Jenkins in 2011. Oracle continued the development of Hudson. Nowadays Jenkins is developed as an open-source community project, with CloudBees as one of its major contributors.

Efficient software development pipeline

Jenkins simplifies the integration of changes into projects by providing a continuous integration system. It automates the build process and thereby increases productivity, because developers get feedback on their changes very quickly. Installation and configuration are straightforward. A large number of plugins are available that you can use to customize your Jenkins instance to your needs.

Quality through continuous integration

Continuous integration (CI) is the practice of frequently integrating code changes with the main repository by merging all developer working copies with the shared mainline several times a day. The aim is to prevent a problem known as “integration hell”:

Developers work on a local copy of the main repository. Whenever someone pushes changes to the main repository, these need to be integrated. This is no problem as long as no other developer has changed the code in the meantime. If the code has changed, however, it is necessary to check whether the new push is affected by those changes. If a lot of changes were made, the developer ends up in integration hell: they need to spend a lot of time integrating their changes into the revised main repository.

To wrap it up: CI ensures that developers are always working on code that isn't “outdated” and that changes are integrated in small steps.
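
The effect of integrating late can be illustrated with a small sketch. It is a deliberate simplification: it assumes that a file changed both locally and on the mainline is a potential conflict, and the function name `conflicting_files` and the file names are made up for this example.

```python
def conflicting_files(local_changes, mainline_commits):
    """Return the files touched both locally and on the mainline since checkout.

    Simplifying assumption: a file changed on both sides is a potential conflict.
    """
    touched_upstream = set()
    for commit in mainline_commits:
        touched_upstream.update(commit)
    return sorted(set(local_changes) & touched_upstream)


# Hypothetical example data: files changed in the developer's working copy ...
local = {"billing.py", "README.md"}

# ... and files changed by mainline commits since the developer's last update.
after_one_hour = [{"billing.py"}]
after_one_week = [{"billing.py"}, {"README.md", "api.py"}, {"billing.py", "db.py"}]

print(conflicting_files(local, after_one_hour))
print(conflicting_files(local, after_one_week))
```

The longer the working copy stays unmerged, the more mainline commits accumulate and the more files need to be checked during integration.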

Today, CI is often implemented with a build server that runs continuous quality-control processes: unit and integration tests, static and dynamic analysis, performance measurement and profiling, and the extraction and formatting of documentation from the source code. These measures have two aims:

- improvement of the software quality

- reduction of software delivery time

Continuous Integration Principles

If you want to use continuous integration, you should stick to its principles. They also sum up very well what CI is really about:

- Maintain a code repository: All artefacts required to build the project should be placed in the repository. In this practice the convention is that the system should be buildable from a fresh checkout and not require additional dependencies. The baseline (or trunk) should be the place for the working version of the software.

- Automate the build: A single command should have the capability of building the system. Automation of the build should include automating the integration, which often includes deployment into a production-like environment.

- Make the build self-testing: Once the code is built, all tests should run to confirm that it behaves as the developers expect it to behave.

- Everyone commits to the baseline every day: By committing regularly, every committer can reduce the number of conflicting changes. Checking in a week’s worth of work runs the risk of conflicting with other features and can be very difficult to resolve. Early, small conflicts in an area of the system cause team members to communicate about the change they are making. Committing all changes at least once a day is generally considered part of the definition of continuous integration. In addition performing a nightly build is generally recommended.

- Every commit (to baseline) should be built: The system should build commits to the current working version in order to verify that they integrate correctly. For many people, continuous integration is synonymous with using automated continuous integration where a continuous integration server monitors the version control system for changes, then automatically runs the build process.

- Keep the build fast: The build needs to complete rapidly, so that if there is a problem with integration, it is quickly identified.

- Test in a clone of the production environment: A test environment that differs significantly from the production environment can lead to failures when a tested system is deployed to production. However, building an exact replica of the production environment is often cost-prohibitive. Instead, the pre-production environment should be built as a scalable version of the actual production environment, which keeps costs down while preserving the composition and nuances of the technology stack.

- Make it easy to get the latest deliverables: Making builds readily available to stakeholders and testers can reduce the amount of rework necessary when rebuilding a feature that doesn’t meet requirements. Additionally, early testing reduces the chances that defects survive until deployment. Finding errors earlier also, in some cases, reduces the amount of work necessary to resolve them.

- Everyone can see the results of the latest build: It should be easy to find out whether the build breaks and, if so, who made the relevant change.

- Automate deployment: Most CI systems allow the running of scripts after a build finishes. In most situations, it is possible to write a script to deploy the application to a live test server that everyone can look at. A further advance in this way of thinking is continuous deployment, which calls for the software to be deployed directly into production, often with additional automation to prevent defects or regressions.
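
Several of these principles (a single build command, a self-testing build, building every commit, visible results) can be sketched in a few lines. This is only an illustration of the idea, not how Jenkins is implemented: the names `run_build` and `check_once`, the commit IDs, and the stand-in build commands are all assumptions made for this example.

```python
import subprocess
import sys
from typing import Callable, Dict, Optional, Sequence


def run_build(command: Sequence[str]) -> bool:
    """Run the single build command; exit code 0 means build and tests passed."""
    return subprocess.run(command).returncode == 0


def check_once(
    last_built: Optional[str],
    head: str,
    build: Callable[[], bool],
    results: Dict[str, str],
) -> str:
    """Build `head` if it has not been built yet and record the visible result."""
    if head != last_built:
        results[head] = "SUCCESS" if build() else "FAILURE"
    return head


# Stand-ins for a real build command (assumption: any command with an exit code works).
passing = [sys.executable, "-c", "raise SystemExit(0)"]
failing = [sys.executable, "-c", "raise SystemExit(1)"]

# Simulated polling of the repository head: "a1" is seen twice but built only once.
results: Dict[str, str] = {}
last = None
for commit, command in [("a1", passing), ("a1", passing), ("b2", failing)]:
    last = check_once(last, commit, lambda: run_build(command), results)

print(results)
```

A real CI server would obtain the head commit from the version control system, or be notified by it, instead of iterating over a fixed list, and it would publish the results to the whole team.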

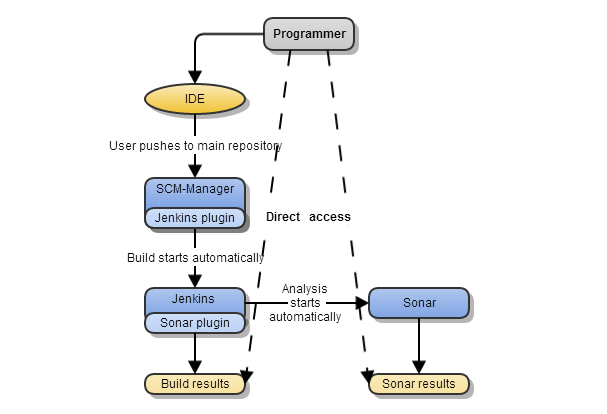

Jenkins integration with SonarQube and SCM-Manager

We integrated Jenkins into the Cloudogu EcoSystem because we want to provide the foundation for continuous processes such as continuous integration. In our EcoSystem, Jenkins is connected to SCM-Manager and to the static code analysis tool SonarQube.

This workflow enables you to implement a CI process, because some of the principles are already covered:

- maintain a code repository: the baselines are stored centrally in SCM-Manager

- automate the build: Jenkins automatically executes the build process and tells the developer whether or not the program can be built

- every commit should be built: every push to SCM-Manager triggers Jenkins and the build is executed

- everyone can see the results of the latest build: everyone in the team has access to the results and can be notified automatically

The rest is up to you! Most of the other principles are in the hands of the users and the infrastructure: a build can only be self-testing if automated tests have been implemented; how often developers commit to the baseline is up to them; testing in a clone of the production environment is only possible if such a clone exists; making the deliverables accessible is up to the organization; and deployment can only be automated if a test server is permanently available and someone writes the deployment script.

Try the toolchain with Jenkins yourself

If you’re already using Cloudogu EcoSystem, you simply have to install the Jenkins Dogu. During the setup, all the necessary configurations will be done automatically. If you don’t have an EcoSystem of your own yet, you should get an appointment for a live demo with us right away.

Get your personal product demonstration with us. In only 30 minutes we will answer all your questions and show you the installation and operation of the Cloudogu EcoSystem.

Note: On Oct. 2nd we adapted the post to Cloudogu EcoSystem

- referring to http://en.wikipedia.org/wiki/Continuous_integration#Software (Jul. 2013)